Root Cause Analysis - Part 3: Securing AI with Root-Level Fixes

Shireen Pereira

Securin Team

Oct 24, 2025

In Part 2 of our Root Cause of AI Weaknesses series, we examined how attackers exploit vulnerabilities such as prompt injection in EchoLeak (CVE-2025-32711, CWE-77: Command Injection) and SSRF in NVIDIA Triton (CVE-2025-23319, CWE-918: Server-Side Request Forgery), turning AI systems deployed at scale into battlegrounds for data theft and remote code execution. Securin’s root cause analysis, mapped to the Common Weakness Enumeration (CWE), showed how inherited software flaws and AI-native weaknesses compound risk, and made clear the urgent need for AI vulnerability intelligence.

In this final part, we move from exposure to defense—arming organizations with root-level protection strategies such as rigorous input sanitization, access control enforcement, and secure deserialization. We also examine current and emerging attack trends, translating them into practical guidelines for defending against AI-driven cyber risk. These defenses not only help enterprises secure their AI environments today but also provide a blueprint for LLM developers to build secure-by-design AI frameworks capable of outpacing attackers in 2025 and beyond.

Key Defense Priorities for Industries

To secure AI systems, organizations must prioritize the most exploited weaknesses—memory safety, input validation, and information exposure—before tackling less urgent flaws. Securin’s recommendations combine advanced threat detection, robust data classification, continuous monitoring, and rapid incident response with sector-specific and universal defense priorities:

Universal Defense Priorities

| Priority | Recommendation |
|---|---|
| Input Sanitization | Validate all inputs to block malicious data, addressing CWE-20 issues such as those seen in PyTorch’s unsafe model loading (CVE-2025-32434). This helps prevent injection attacks like EchoLeak. |
| Access Controls | Require authentication for critical functions and limit permissions, tackling CWE-306 (Missing Authentication for Critical Function) flaws like the one in Anthropic’s MCP Inspector (CVE-2025-49596). This ensures only trusted users or processes interact with AI systems. |
| Secure Deserialization | Use safe loaders to prevent harmful code execution, addressing CWE-502 (Deserialization of Untrusted Data) risks in frameworks like PyTorch. This stops attackers from embedding malicious code in model files. |
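For the Secure Deserialization row, the core idea behind a "safe loader" is an allowlist: the loader refuses to reconstruct any object type it was not explicitly told to trust. The Python sketch below shows that idea with the standard library's `pickle` module by overriding `Unpickler.find_class`, the hook the Python documentation recommends for restricting globals. The allowlist contents and the `safe_loads` helper are assumptions for illustration, not a PyTorch API. PyTorch's own `torch.load(..., weights_only=True)` applies a similar restriction, but CVE-2025-32434 showed that mode could be bypassed in versions 2.5.1 and earlier, so upgrading, or using a data-only format such as safetensors, still matters.

```python
import io
import pickle

# Hypothetical allowlist of (module, name) pairs the loader may import.
# Plain dicts, lists, strings, and numbers use dedicated pickle opcodes and
# never pass through find_class, so they do not need to be listed here.
SAFE_GLOBALS = {
    ("collections", "OrderedDict"),
}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that refuses to import any global not on the allowlist."""

    def find_class(self, module, name):
        # Every class or callable referenced by the pickle is resolved here,
        # so rejecting it stops the payload before any of its code can run.
        if (module, name) in SAFE_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def safe_loads(data: bytes):
    """Deserialize bytes, rejecting pickles that reference unlisted globals."""
    return RestrictedUnpickler(io.BytesIO(data)).load()
```

With this loader, a pickle whose `__reduce__` points at `os.system`, or at any other callable missing from the allowlist, fails with `UnpicklingError` before anything executes.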

Industry-Specific Priorities

| Industry | Priority |
|---|---|
| Healthcare | Prioritize input validation (CWE-20) and authentication (CWE-306) to protect patient data from ransomware that exploits weak access controls. |
| Financial Services | Focus on deserialization (CWE-502) and command injection (CWE-77) to prevent fraud and compliance breaches in AI-driven platforms. |
| Critical Infrastructure | Deploy memory safety programs (CWE-119) to counter nation-state actors who exploit low-level flaws for strategic advantage. |

CWE-based root cause analysis helps organizations prioritize these defenses and disrupt attacker strategies such as APT campaigns and ransomware.
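The authentication priority (CWE-306) named under Access Controls and Healthcare reduces to one rule: a critical function must refuse to run until the caller has proven who it is. In broad terms, the MCP Inspector issue behind CVE-2025-49596 came from a proxy that accepted requests without such a check. The Python sketch below shows a deny-by-default decorator; `EXPECTED_TOKEN`, `requires_auth`, and `run_tool` are hypothetical names, and a real deployment would load its secret from a secrets manager and likely use a full authentication scheme rather than a single shared token.

```python
import functools
import hmac
import secrets

# Hypothetical shared secret; a real service would load it from a secrets store.
EXPECTED_TOKEN = secrets.token_urlsafe(32)


class AuthError(PermissionError):
    """Raised when a caller is not authenticated for a critical function."""


def requires_auth(func):
    """Wrap a critical function so it runs only for callers with a valid token."""

    @functools.wraps(func)
    def wrapper(token, *args, **kwargs):
        # Deny by default: missing or non-string tokens are rejected outright.
        if not isinstance(token, str) or not token:
            raise AuthError("missing credentials")
        # compare_digest reduces the risk of leaking, via timing, how much matched.
        if not hmac.compare_digest(token.encode(), EXPECTED_TOKEN.encode()):
            raise AuthError("invalid credentials")
        return func(*args, **kwargs)

    return wrapper


@requires_auth
def run_tool(tool_name):
    """Hypothetical critical function, e.g. one that executes a tool or command."""
    return f"ran {tool_name}"
```

Putting the check in a decorator keeps it in one place, so a newly added critical function is protected by adding one line rather than by remembering to re-implement the check.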

Evolving Threats: Mapping the AI Attack Landscape

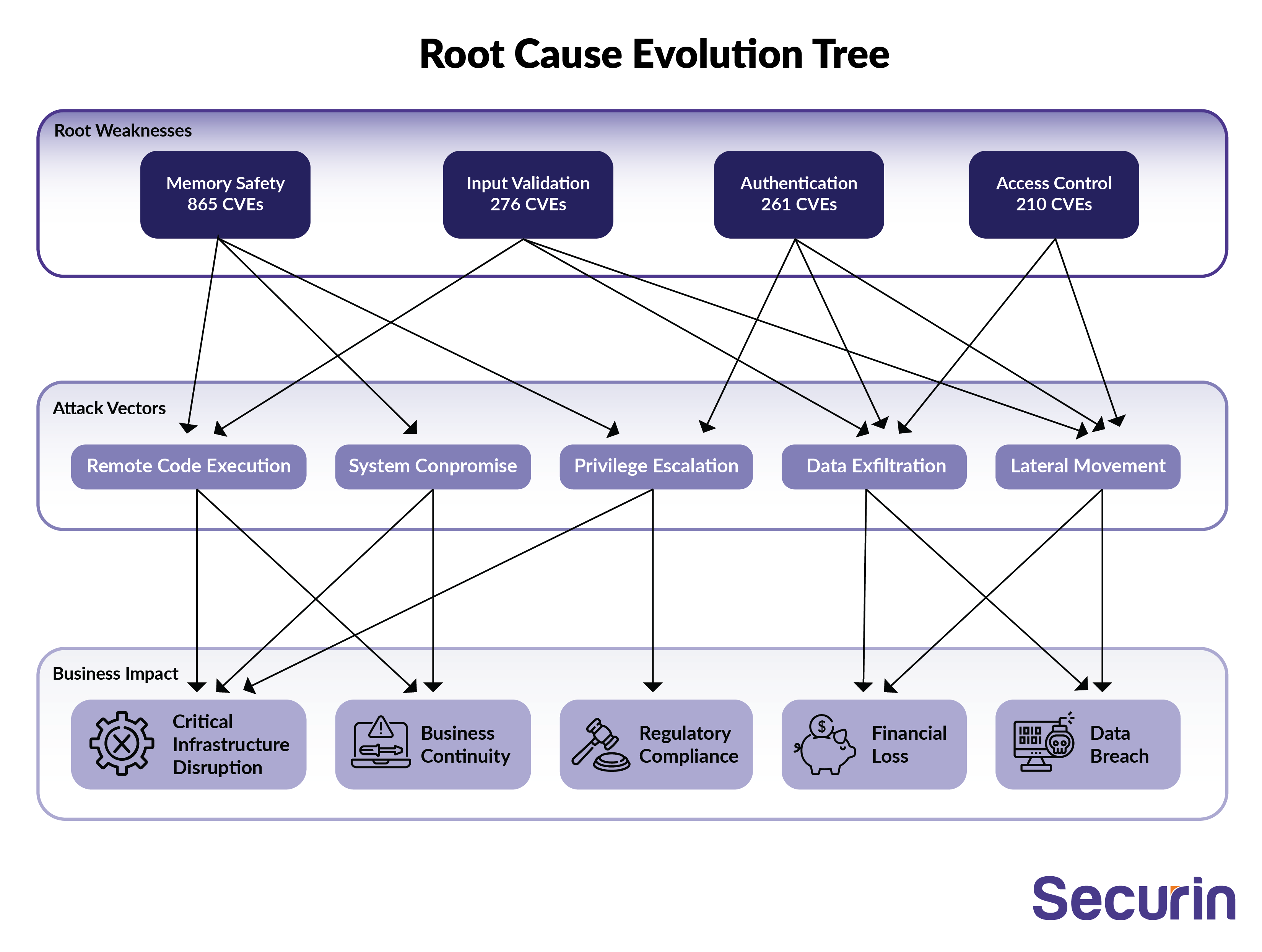

The evolution of exploitation preferences shows how adversaries adapt over time, with patterns emerging in generational weaknesses, motive-driven specialization, and the systematic distribution of vulnerabilities.

Securin’s Root Cause Evolution Tree, visualized in this section, maps long-standing flaws to their origins, showing why they persist, how they evolve into new attack vectors, and where adversaries are likely to strike next. For example, memory safety issues, linked to 865 CVEs (40% of our dataset), remain entrenched in core systems built with memory-unsafe languages such as C and C++. Attackers are also adapting long-standing weakness classes such as command injection into AI-specific threats like prompt injection (CWE-77). The trends below show how these inherited flaws fuel the next generation of AI-targeted exploits.

• Long-Standing Flaws: Memory safety issues dominate, occupying four of the Top 10 CWEs (#1, #4, #7, and #9), because operating systems and runtimes still depend on legacy software.

• Attacker Specialization: Nation-state APTs exploit memory corruption (such as CWE-119) for persistent access; ransomware operators target input validation flaws (CWE-20); AI/ML attackers use command injection (CWE-77) to compromise models.

• Vulnerability Patterns: Memory flaws cluster in low-level systems, injection flaws hit web and AI applications, and a surge in deserialization flaws signals new attacker tooling.
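Figures such as "865 CVEs (40% of our dataset)" come from mapping each CVE to its underlying CWE and then grouping CWEs into weakness families. The Python sketch below illustrates that kind of aggregation under simplifying assumptions: each CVE carries a single primary CWE, the `FAMILIES` grouping is a hypothetical, non-exhaustive example rather than Securin's actual taxonomy, and any records fed to it here are placeholders, not real data.

```python
from collections import Counter

# Hypothetical, non-exhaustive grouping of CWE IDs into weakness families.
FAMILIES = {
    "memory safety": {"CWE-119", "CWE-120", "CWE-125", "CWE-416", "CWE-476", "CWE-787"},
    "injection": {"CWE-77", "CWE-78", "CWE-79", "CWE-89", "CWE-94"},
    "deserialization": {"CWE-502"},
    "input validation": {"CWE-20"},
}


def family_of(cwe_id):
    """Return the weakness family for a CWE ID, or "other" if it is unmapped."""
    for family, members in FAMILIES.items():
        if cwe_id in members:
            return family
    return "other"


def weakness_distribution(records):
    """Given (cve_id, primary_cwe_id) pairs, return each family's share of CVEs."""
    counts = Counter(family_of(cwe_id) for _, cwe_id in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {family: count / total for family, count in counts.items()}
```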

Future AI Attack Trends

- AI-assisted vulnerability discovery will increase exploitation of complex weakness combinations that human analysts traditionally miss.

- Attacks on AI/ML frameworks will target training data and model integrity through path traversal and code injection, driven by strong economic incentives such as premium bounty rates.

- Cloud-native weaknesses in container orchestration and serverless platforms will become primary targets as cloud adoption accelerates.

- IoT device vulnerabilities will be exploited to build large-scale botnets, enabling DDoS and ransom campaigns against critical infrastructure.

- Model poisoning attacks will exploit input validation flaws in AI/ML pipelines, compromising both training datasets and inference results.
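Several of these trends, path traversal against training data and model poisoning through input validation flaws in particular, come down to untrusted input reaching a data pipeline unchecked. The Python sketch below shows two gates a pipeline might apply before ingesting data: confining user-supplied file paths to a dataset root (CWE-22) and rejecting malformed records (CWE-20). `DATASET_ROOT`, `ALLOWED_LABELS`, and the record schema are hypothetical. Checks like these stop malformed or out-of-place data; on their own they do not detect well-formed but deliberately mislabeled samples.

```python
from pathlib import Path

# Hypothetical pipeline settings; adjust to the real dataset layout and schema.
DATASET_ROOT = Path("/srv/training-data").resolve()
ALLOWED_LABELS = {"benign", "malicious"}
MAX_TEXT_LEN = 10_000


def resolve_inside_root(user_path, root=DATASET_ROOT):
    """Resolve a user-supplied path and refuse anything outside the root (CWE-22)."""
    # resolve() collapses ".." segments and follows existing symlinks, so the
    # containment check runs on the real target, not the string the user sent.
    candidate = (root / user_path).resolve()
    try:
        candidate.relative_to(root)
    except ValueError:
        raise ValueError(f"path escapes dataset root: {user_path}") from None
    return candidate


def validate_record(record):
    """Reject malformed training records before they enter the dataset (CWE-20)."""
    text = record.get("text")
    label = record.get("label")
    if not isinstance(text, str) or not 0 < len(text) <= MAX_TEXT_LEN:
        raise ValueError("text is missing, empty, or too long")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    return record
```

Note that joining an absolute path such as `/etc/passwd` onto the root discards the root entirely, which is why the check runs on the resolved result rather than on the input string.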

How to Defend Against AI-Driven Cyber Risk

With the current and future threat landscape in mind, Securin has developed a set of guidelines to strengthen AI security. Accountability should be shared across the ecosystem—from end users to system architects, including LLM developers and no-code platform providers—and must be reinforced through established frameworks.

• MITRE CWE (e.g., CWE-1426: Improper Validation of Generative AI Output) enables targeted mitigation during development.

• ISO/IEC 42001 (Dec 2023) establishes global standards for AI governance and lifecycle security.

• ISO/IEC 42005 (May 2025) provides methods for impact assessment, transparency, and trust.
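CWE-1426 covers applications that act on generative AI output without checking it. One common mitigation is to treat model output as untrusted input: parse it into a structure, check it against a fixed contract, and only then act on it. The Python sketch below assumes a hypothetical contract in which the model must return a JSON object with exactly an `action` and an `argument`; `ALLOWED_ACTIONS` and the 2,000-character limit are illustrative assumptions.

```python
import json

# Hypothetical contract the application expects the model to follow.
ALLOWED_ACTIONS = {"summarize", "translate", "classify"}
REQUIRED_KEYS = {"action", "argument"}
MAX_ARGUMENT_LEN = 2000


def validate_model_output(raw):
    """Treat LLM output as untrusted input: parse it and enforce the contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    # Exact key match: extra fields the model invents are rejected, not ignored.
    if not isinstance(data, dict) or set(data) != REQUIRED_KEYS:
        raise ValueError("model output does not match the expected schema")
    action, argument = data["action"], data["argument"]
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not isinstance(argument, str) or len(argument) > MAX_ARGUMENT_LEN:
        raise ValueError("argument is missing or too long")
    return data
```

An allowlist of actions is the key design choice here: if a prompt injection convinces the model to emit a destructive instruction, the application still refuses anything outside the contract.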

Building on these frameworks, Securin’s guidelines translate standards into practice, helping organizations implement structured, auditable, and repeatable defense strategies to mitigate AI-related threats.

• Implement weakness-aware vulnerability scanning that prioritizes CVEs based on underlying weakness patterns, not just CVSS scores.

• Deploy runtime protection mechanisms targeting memory safety weaknesses in legacy systems.

• Establish threat actor attribution workflows correlating exploitation patterns with known attacker behaviors.

• Integrate secure development lifecycle practices, emphasizing weakness prevention during design rather than post-deployment patching.

• Adopt threat modeling frameworks incorporating the exploitation preferences of relevant threat actor groups.

• Implement supply chain security programs evaluating third-party software based on weakness distribution patterns.
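The first guideline, prioritizing CVEs by underlying weakness rather than by CVSS alone, can be sketched as a scoring function that scales a CVSS base score by a weight for the CVE's weakness family and boosts anything known to be exploited. The weights, the `CWE_FAMILY` mapping, and the exploitation bonus below are hypothetical placeholders chosen for illustration; they are not Securin's scoring model, and a real program would calibrate them against its own exploitation data.

```python
from dataclasses import dataclass

# Hypothetical weights; a real program would calibrate these against its own
# exploitation data rather than use these placeholder values.
FAMILY_WEIGHT = {
    "memory safety": 1.5,
    "injection": 1.4,
    "deserialization": 1.3,
    "input validation": 1.2,
}
CWE_FAMILY = {
    "CWE-119": "memory safety",
    "CWE-416": "memory safety",
    "CWE-787": "memory safety",
    "CWE-77": "injection",
    "CWE-94": "injection",
    "CWE-502": "deserialization",
    "CWE-20": "input validation",
}
EXPLOITED_BONUS = 5.0  # hypothetical boost for weaknesses seen exploited in the wild


@dataclass
class Finding:
    cve_id: str
    cvss: float
    cwe_id: str
    exploited: bool = False


def priority(finding):
    """Scale CVSS by the weakness family's weight; unmapped CWEs keep weight 1.0."""
    family = CWE_FAMILY.get(finding.cwe_id)
    score = finding.cvss * FAMILY_WEIGHT.get(family, 1.0)
    if finding.exploited:
        score += EXPLOITED_BONUS
    return score


def triage(findings):
    """Return findings ordered from highest to lowest weakness-aware priority."""
    return sorted(findings, key=priority, reverse=True)
```

Under these placeholder weights, an exploited CVSS 7.0 memory-safety finding ranks first, and a CVSS 8.1 deserialization finding outranks an unmapped CVSS 9.8 finding: the kind of reordering a CVSS-only queue would miss.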

Securing AI at the Root: Building Trust by Design

Based on our exploration of root cause analysis, one pattern is clear: weaknesses in AI libraries and frameworks are the engine driving many of today’s attacks. From hidden flaws in AI foundations (Part 1), to real-world exploits at scale (Part 2), and finally to root-level defenses (Part 3), this series has traced the full lifecycle of AI vulnerabilities—and how they can be broken. Drawing on Securin’s root cause analysis, organizations can move beyond reactive patching toward resilient, secure-by-default AI systems that anticipate and withstand tomorrow’s threats.

The way forward demands shared responsibility. From end users to system architects—including LLM developers and no-code platform providers—every stakeholder must act. Proactive steps like securing supply chains, deploying runtime defenses against memory exploits, and adopting phased, auditable frameworks are essential. Only by aligning strong security with economic incentives can we dismantle the exploitation cycle and build AI that is not just powerful, but secure by design and trustworthy.