Root Cause Analysis Part 2: Scaling AI Through Exploding Risks and Evolving Attacks

Shireen Pereira

Securin Team

Oct 10, 2025

In Part 1 of our Root Cause of AI Weaknesses series, we showed how long-standing software flaws, such as improper input validation (CWE-20), and AI-specific weaknesses, like prompt injection and Server-Side Request Forgery (SSRF), expand attack surfaces and threaten both organizations using AI and AI systems themselves. Cases like EchoLeak’s zero-click leaks (CVE-2025-32711, CWE-77 Command Injection) and Triton’s SSRF exploit (CVE-2025-23319, CWE-918 SSRF) revealed how these vulnerabilities persist in modern frameworks. By mapping software flaws to their Common Weakness Enumerations (CWEs), Securin uncovered the root causes behind these risks, helping organizations understand what must be fixed to build stronger defenses.

Catch up on Part 1 of the Root Cause of AI Weaknesses. Now, in Part 2, we shift from what the flaws are to how attackers use them—exposing the techniques behind exploitation, the amplified risks of scaling AI, and why AI vulnerability intelligence is essential to stay ahead of fast-evolving threats.

How Attackers Hijack AI: Deadly Attack Vectors

Attackers target weaknesses in AI systems, such as poor input checks or unsafe data handling, to launch devastating attacks. These weaknesses, mapped to CWE categories, allow attackers to exploit AI with minimal access—often just a malicious file or network connection. Here’s how they do it:

• Symlink and Path Attacks in Anthropic MCP: In CVE-2025-53109 (CWE-61 Symlink Following) and CVE-2025-53110 (CWE-22 Path Traversal), attackers use crafted shortcuts (symlinks) or manipulated file paths in tools like Claude Desktop to escape security boundaries. This lets them read or write sensitive system files, like macOS Launch Agents, enabling remote code execution (RCE) where attackers run harmful code on your system.

• Information Leaks in NVIDIA Triton: CVE-2025-23319 (CWE-119 Improper Restriction of Operations within the Bounds of a Memory Buffer) chains overly verbose error messages with shared memory manipulation, allowing attackers to send malicious inference requests and gain unauthenticated RCE, taking over the system without login credentials.

• Unsafe Model Loading in PyTorch: CVE-2025-32434 (CWE-502 Deserialization of Untrusted Data) lets attackers embed malicious code in AI models loaded via PyTorch’s torch.load, even with safety settings (weights_only=True), triggering RCE in training pipelines.

• Prompt Injection in EchoLeak: In Microsoft 365 Copilot (CVE-2025-32711, CWE-77 Command Injection), attackers hide malicious instructions in emails, tricking the AI into leaking OneDrive files or Teams messages without user interaction.

• SSRF in Azure OpenAI: CVE-2025-53767 (CWE-918 SSRF) allows attackers to make the AI server request internal resources, stealing sensitive metadata like access tokens.

• Sandbox Escapes in Hugging Face: CVE-2025-5120 (CWE-284 Improper Access Control) exploits weak function restrictions in Smolagents, letting attackers run arbitrary code outside the sandbox—a secure environment meant to isolate code.

These attacks often need only a single weak point, like untrusted data or network exposure, making AI systems vulnerable to supply chain attacks, where malicious code sneaks in through third-party components.
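To make the symlink and path traversal cases above concrete, here is a minimal Python sketch of a containment check that resolves a requested path before comparing it to an allowed root. It is an illustration only, not the fix shipped for any of the CVEs listed; the function name `is_within_root` and the directory layout are assumptions made for the example.

```python
from pathlib import Path


def is_within_root(root: Path, candidate: str) -> bool:
    """Return True only if `candidate`, once fully resolved, stays inside `root`.

    Hypothetical helper for illustration; not taken from any real product.
    """
    root_resolved = root.resolve()
    # resolve() collapses ".." segments and follows symlinks, so the check
    # is made against the real target, not the string the caller supplied.
    target = (root_resolved / candidate).resolve()
    # Compare whole path components rather than string prefixes, so a
    # sibling such as "/data/allowed_evil" is not mistaken for "/data/allowed".
    return target == root_resolved or root_resolved in target.parents


if __name__ == "__main__":
    allowed = Path("/tmp/allowed")  # assumed sandbox root for the demo
    for requested in ("notes.txt", "../allowed_evil/secret.txt", "/etc/passwd"):
        print(requested, is_within_root(allowed, requested))
```

A check like this still leaves a window between validation and use, so it works best alongside least-privilege file permissions rather than as the only barrier.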

Scaling AI Systems Amid Escalating Risks

As AI systems grow to handle enterprise-scale tasks, the risks scale with them: small vulnerabilities become massive risks. A single flaw can disrupt millions of users or expose sensitive data across industries. Key impacts include:

• Model Theft and Tampering: A single RCE in NVIDIA Triton (CVE-2025-23319, CWE-119 Improper Restriction of Operations within the Bounds of a Memory Buffer) or TensorRT-LLM (CVE-2025-23254, CWE-20 Improper Input Validation) could let attackers steal AI models, alter their outputs (e.g., generating false results), or hijack networks, affecting millions of users in applications like autonomous vehicles.

• Data Breaches in Cloud AI: In Azure OpenAI, SSRF (CVE-2025-53767, CWE-918 SSRF) can escalate to token theft, exposing sensitive data like patient records in healthcare systems relying on cloud AI.

• Zero-Click Leaks in M365: EchoLeak (CVE-2025-32711, CWE-77 Command Injection) risks leaking corporate secrets across Microsoft 365 environments, with no user action required, impacting global organizations.

• System Crashes and Bias: TensorFlow’s unbounded recursion flaw (CVE-2025-0649, CWE-674 Uncontrolled Recursion) causes denial-of-service (DoS) crashes, halting AI services. Other risks include model poisoning, where attackers manipulate training data to produce biased or harmful outputs, or regulatory fines from data breaches.

With AI processing petabytes of data, these exploits could lead to massive breaches, costly penalties, and eroded trust in AI-driven decisions, like those in financial or medical systems.
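The uncontrolled recursion risk (CWE-674) mentioned above can be illustrated with a small Python sketch that measures how deeply a JSON payload is nested before handing it to a recursive parser. This is a generic pattern, not TensorFlow's actual patch; `MAX_DEPTH`, `max_nesting_depth`, and `parse_bounded` are hypothetical names, and the limit of 32 is an arbitrary assumption.

```python
import json

MAX_DEPTH = 32  # assumed limit; tune to what legitimate requests need


def max_nesting_depth(text: str) -> int:
    """Scan raw JSON text iteratively and return its deepest bracket nesting."""
    depth = 0
    deepest = 0
    in_string = False
    escaped = False
    for ch in text:
        if in_string:
            # Brackets inside string literals do not count as structure.
            if escaped:
                escaped = False
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
        elif ch == '"':
            in_string = True
        elif ch in "[{":
            depth += 1
            deepest = max(deepest, depth)
        elif ch in "]}":
            depth -= 1
    return deepest


def parse_bounded(text: str, limit: int = MAX_DEPTH):
    """Reject overly nested input before any recursive processing starts."""
    if max_nesting_depth(text) > limit:
        raise ValueError(f"JSON nesting exceeds limit of {limit}")
    return json.loads(text)
```

Because the scan is a single loop with no recursion of its own, a hostile payload costs linear time to reject instead of exhausting the call stack.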

The Need for AI Vulnerability Intelligence

Traditional security tools, like firewalls or antivirus, struggle with AI-specific threats like prompt injection (CWE-77) or unsafe deserialization (CWE-502). “AI Vulnerability Intelligence” is a new approach that uses proactive scanning, threat modeling, and tailored defenses to identify risks in AI frameworks like Anthropic MCP or PyTorch. It helps organizations:

• Detect Risks Early: Spot vulnerabilities before attackers exploit them, using tools that understand AI workflows.

• Map Threats with CWE: Link flaws to CWE categories (e.g., CWE-918 for SSRF) to pinpoint root causes.

• Apply Tailored Defenses: Implement protections like least-privilege execution (limiting what code can do) and safe loaders (restricting harmful data imports).

As AI becomes central to business operations, this intelligence is essential to protect innovation from evolving exploits and maintain trust.
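As a rough illustration of the "safe loader" idea, the sketch below uses Python's standard `pickle` module with an allowlist, following the restricted-unpickler pattern described in the Python documentation. It is not PyTorch's loader and does not address CVE-2025-32434 itself; the names `SAFE_BUILTINS`, `RestrictedUnpickler`, and `restricted_loads` and the contents of the allowlist are assumptions chosen for the example.

```python
import builtins
import io
import pickle

# Assumed allowlist: only these harmless built-in types may be reconstructed.
SAFE_BUILTINS = {"range", "complex", "set", "frozenset", "slice"}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that refuses every global not explicitly allowlisted."""

    def find_class(self, module, name):
        # find_class is called whenever the pickle stream asks for a global
        # (a class or function); this is where code execution would begin.
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Deserialize `data`, raising UnpicklingError on any disallowed global."""
    return RestrictedUnpickler(io.BytesIO(data)).load()
```

An allowlist narrows what a serialized file can trigger, but it complements rather than replaces patching the framework and loading models only from trusted sources.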

Outsmarting Threat Actors and Ransomware Strategies: Key Defense Priorities

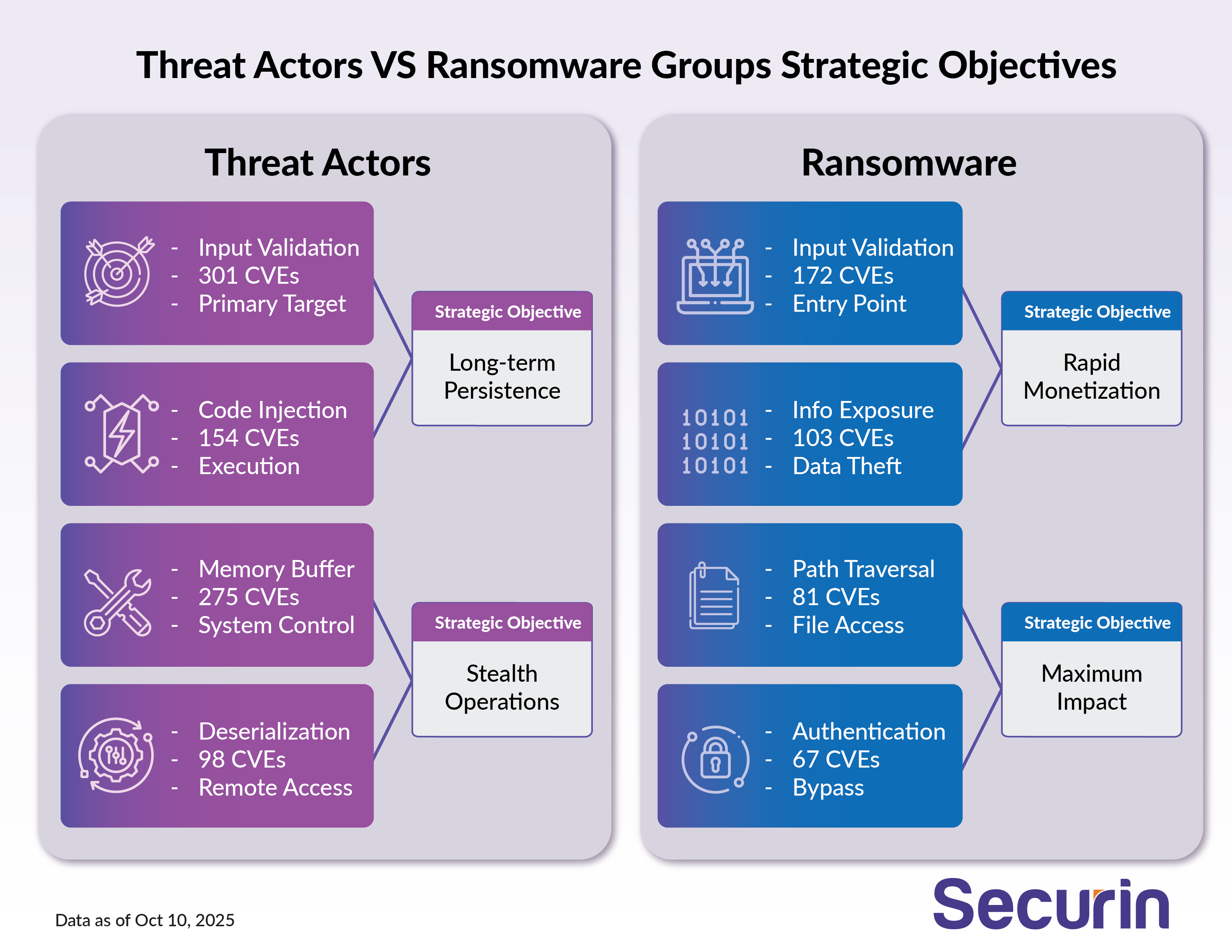

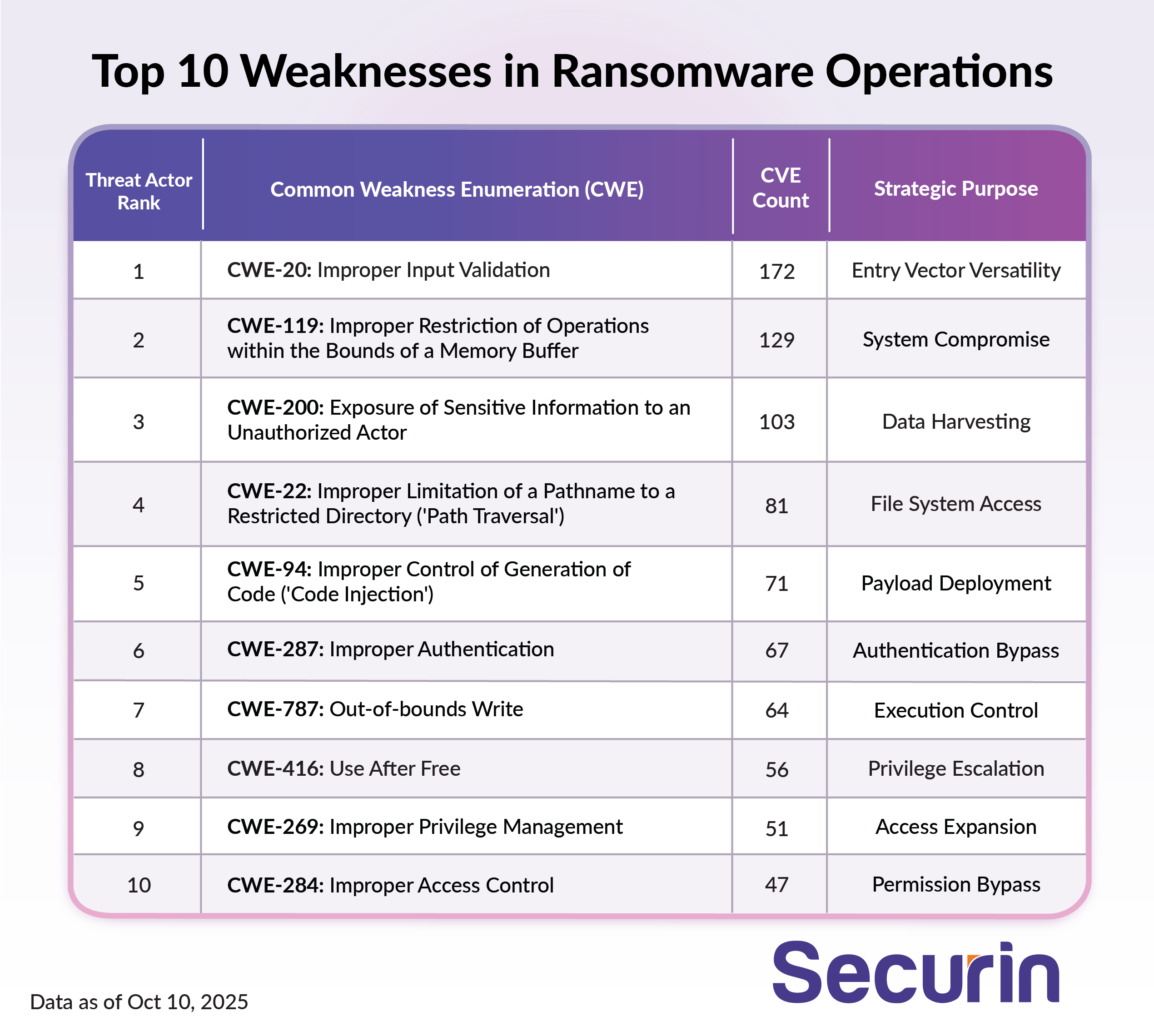

While software weaknesses are exploited by both threat actors and ransomware groups, they both operate distinctly. The image below contrasts their motivations, tactics, and target preference, pinpointing how motivation drives different exploitation strategies.

The table below shows that, despite differing motivations—opportunistic exploitation versus targeted monetization—both threat actors and ransomware groups target the same weaknesses. Addressing these vulnerabilities as a priority will help organizations secure themselves on all fronts.

Threat Actors (Advanced Persistent Threat Groups)

Sophisticated threat actors focus on memory corruption and input validation weaknesses to gain deep, long-term access:

• Input Validation Failures: Weak checks allow attacks like injections, buffer overflows, or authentication bypasses, enabling stealthy RCE, as seen in Anthropic MCP Inspector’s missing authentication flaw (CVE-2025-49596, CWE-306 Missing Authentication for Critical Function).

• Memory Corruption: Provides low-level system access, letting attackers plant hidden code, persist in system kernels, or evade detection.

• Privilege Inheritance: Attackers gain the rights and trust of compromised processes, amplifying control across systems.

Ransomware Groups

Ransomware actors prioritize fast profit through data theft or system lockdowns. Their strategies include:

• Information Exposure: Accessing sensitive data, as in Hugging Face Smolagents’ sandbox escape (CVE-2025-5120, CWE-284 Improper Access Control), enables “double extortion,” in which attackers demand payment both to decrypt systems and to refrain from leaking the stolen data.

• Business Model Evolution: Even if encryption fails or victims refuse to pay, stolen data (e.g., corporate secrets via EchoLeak, CVE-2025-32711, CWE-77 Command Injection) is sold or exposed for profit.

• High-speed Impact: Attackers optimize for rapid exfiltration and strategic advantage.

Together, these tactics show how ransomware groups and threat actors are turning AI weaknesses into faster, more profitable attack models.

Input sanitization, access controls, and secure deserialization, mapped to CWEs like CWE-306 in Anthropic MCP (CVE-2025-49596), form a robust defense against APTs and ransomware exploiting AI weaknesses. These priorities, grounded in root cause analysis, strengthen secure-by-design AI systems.
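One way to picture input sanitization against SSRF (CWE-918) is a pre-flight check on any URL a service is asked to fetch. The Python sketch below is a simplified illustration under stated assumptions: the helper names, the HTTPS-only policy, and the injectable `resolve` parameter are all hypothetical, and it does not describe how any vendor fixed the CVEs above.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"https"}  # assumed policy for this sketch


def is_public_address(ip_text: str) -> bool:
    """Return True only for addresses outside internal or special ranges."""
    try:
        ip = ipaddress.ip_address(ip_text)
    except ValueError:
        return False
    # Link-local covers 169.254.169.254, a common cloud metadata endpoint.
    return not (
        ip.is_private
        or ip.is_loopback
        or ip.is_link_local
        or ip.is_reserved
        or ip.is_multicast
        or ip.is_unspecified
    )


def url_is_safe_to_fetch(url: str, resolve=socket.getaddrinfo) -> bool:
    """Allow a URL only if every address its host resolves to is public."""
    try:
        parts = urlsplit(url)
        port = parts.port or 443
    except ValueError:
        return False
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        return False
    try:
        infos = resolve(parts.hostname, port, type=socket.SOCK_STREAM)
    except OSError:
        return False
    addresses = {info[4][0] for info in infos}
    return bool(addresses) and all(is_public_address(a) for a in addresses)
```

A check at validation time does not cover redirects or a hostname that resolves differently a moment later, so a production guard would also pin the connection to the validated address.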

How can we build on these defenses to fortify AI’s future? Find out in Part 3’s defense strategies on securing AI.