The Root Cause of AI Weaknesses: From Hidden Flaws to Secure-by-Design

Shireen Pereira

Securin Team

Sep 18, 2025

An AI assistant summarizing an email could silently leak your data—no clicks required. In June 2025, the EchoLeak vulnerability (CVE-2025-32711) in Microsoft 365 Copilot proved it, using a zero-click flaw that went undetected for months. More than a breach, EchoLeak exposed a deeper truth: AI doesn’t just inherit old software weaknesses—it amplifies them.

In this three-part Root Cause of AI Weaknesses series, Securin draws on 25 years of software vulnerability research and the latest findings in AI frameworks to trace both inherited and AI-native flaws back to their underlying weakness classes using Common Weakness Enumeration (CWE), a global standard for categorizing software flaws. We map how these weaknesses shape AI’s attack surface, reveal patterns behind the recent surge in generative AI vulnerabilities, and deliver actionable intelligence to eliminate risks at their source. The goal: help organizations work securely with AI today and define the weaknesses that must be addressed to lay the foundation for truly secure-by-design AI.

- Hidden Flaws Lurking Beneath AI Foundations

- Scaling AI Through Exploding Risks and Evolving Attacks

- Securing AI with Root-Level Fixes

Root Cause Analysis - Part 1: Hidden Flaws Lurking Beneath AI Foundations

Today, AI is being built and adopted at record speed, making work faster and easier—but also riskier. That speed has stretched the attack surface, making it broader and easier to exploit. Long-standing flaws like improper input validation (CWE-20) and path traversal (CWE-22) reappear inside AI frameworks, while new weaknesses unique to AI are creating fresh paths for attackers. Together, they multiply AI’s risks and create a surge of generative AI vulnerabilities in 2025.
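To make the two inherited weaknesses named above concrete, here is a minimal Python sketch of a guard against CWE-20 and CWE-22. The function name `resolve_model_path` and the `/srv/models` directory are illustrative assumptions, not taken from any particular AI framework.

```python
from pathlib import Path

# Hypothetical directory holding the files an AI service is allowed to read.
MODEL_ROOT = Path("/srv/models")


def resolve_model_path(user_supplied: str, root: Path = MODEL_ROOT) -> Path:
    """Return an absolute path inside root, or raise ValueError."""
    # CWE-20: reject empty or malformed input before using it.
    if not user_supplied or "\x00" in user_supplied:
        raise ValueError("empty or malformed file name")
    # CWE-22: resolve ".." segments and symlinks, then confirm the result
    # is still contained in the allowed root (is_relative_to: Python 3.9+).
    root_resolved = root.resolve()
    candidate = (root_resolved / user_supplied).resolve()
    if not candidate.is_relative_to(root_resolved):
        raise ValueError(f"path escapes {root_resolved}")
    return candidate
```

A request for `weights/model.bin` resolves to a path under the root, while `../../etc/passwd` or an absolute path such as `/etc/passwd` raises `ValueError`. The check runs on the resolved path rather than on the raw string, so encoded or nested `..` sequences do not slip past it.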

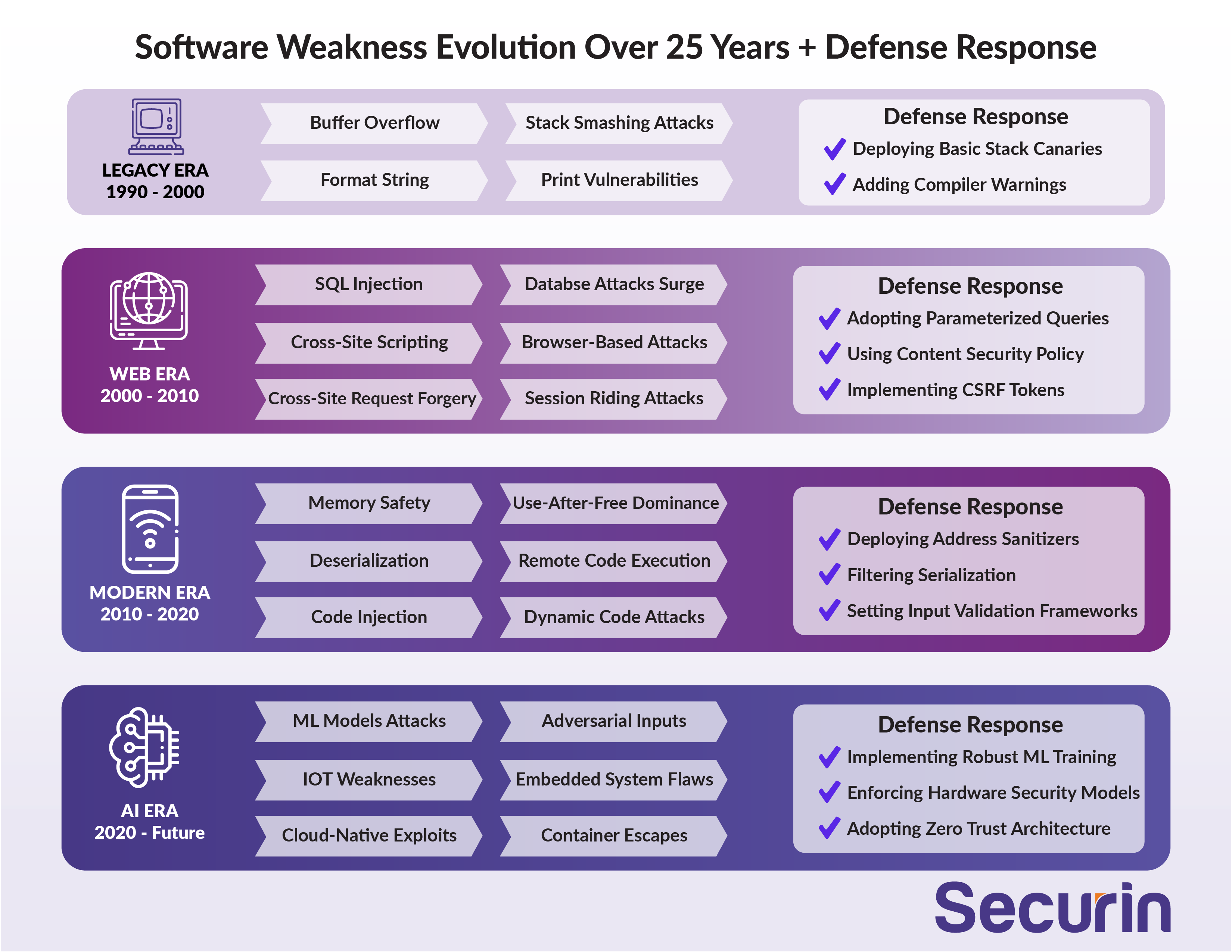

The image below lists the top weaknesses seen across 25 years of software vulnerabilities, from 1990 to the AI era, alongside the defenses that should already be in place to address them.

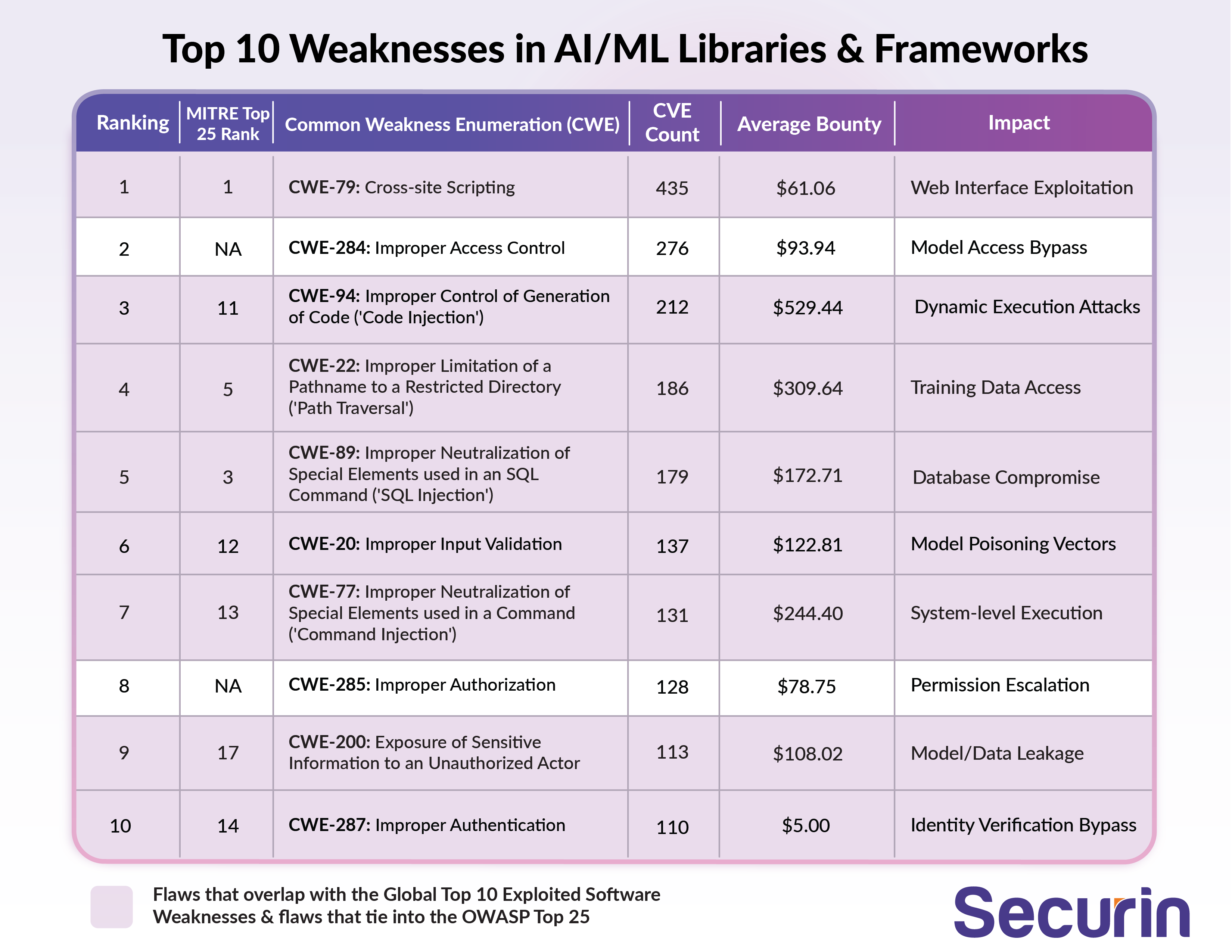

As part of our root cause analysis, we highlight the top 10 exploited CWEs ranked by CVE (Common Vulnerabilities and Exposures) count—key weaknesses that attackers repeatedly target. Securin recommends eliminating these flaws first, before deploying AI in any form.

The New Attack Surface: AI Overlap

In May 2025, a major security flaw rocked the AI world: CVE-2025-3248, discovered in Langflow, a popular Python framework for building LLM-powered applications. The bug stems from missing authentication in the /api/v1/validate/code endpoint, allowing attackers to send crafted POST requests with malicious payloads that the server executes unchecked. This opens the door to full server takeovers, theft of sensitive data like API keys and training datasets, or even malware deployment such as the Flodrix botnet—threatening the integrity of entire AI pipelines. It’s a stark reminder that even cutting-edge AI systems can fall to old-school security gaps.
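The pattern behind this class of bug can be sketched in a few lines of Python. This is an illustration only, not Langflow's code or its patch: the function `validate_code` and its parameters are hypothetical. It shows two defenses, authenticating the caller before the payload is touched, and checking submitted code by parsing it rather than running it.

```python
import ast
import hmac


def validate_code(source: str, presented_key: str, expected_key: str) -> dict:
    """Hypothetical handler body for a code-validation endpoint."""
    # Missing-authentication guard: verify the caller first, using a
    # constant-time comparison to avoid leaking key contents via timing.
    if not hmac.compare_digest(presented_key.encode(), expected_key.encode()):
        raise PermissionError("authentication required")
    # Parse only. ast.parse builds a syntax tree without running the code,
    # so decorators and default arguments are never evaluated.
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return {"valid": False, "error": f"line {exc.lineno}: {exc.msg}"}
    functions = [
        node.name for node in ast.walk(tree) if isinstance(node, ast.FunctionDef)
    ]
    return {"valid": True, "functions": functions}
```

With a correct key, a payload such as `def f(x=__import__('os').getcwd()): return x` is reported as syntactically valid, but the expression in the default argument never runs. With a wrong key, the function raises `PermissionError` before parsing anything.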

Our table of the top 10 AI weaknesses, highlighted in purple across software vulnerability lists in this series, shows just how often these issues pop up. Five of these flaws overlap with the Top 10 most exploited CWEs, and eight tie into the CWE Top 25, proving attackers are repurposing traditional tricks to target AI. Platforms like Huntr.dev are buzzing, with bug hunters earning over $500 on average for spotting AI-related vulnerabilities, underscoring the real-world stakes of these threats.

Securin’s analysis identifies these overlapping flaws as core risk factors that organizations must fix as a priority. It also highlights a strong shift in attack economics. For instance:

- Code injection (CWE-94) now tops Huntr.dev bounty charts ($529.44 avg.) because it can expose AI models, data, and IP worth millions. With generative AI automating exploits and phishing, adversaries increasingly prize dynamic code execution within ML pipelines.

- Frameworks account for 452 CVEs in XSS (CWE-79), one of the most common flaws inherited from traditional software. Integrating AI gives this flaw a larger, more exploitable attack surface.

How AI/ML Weaknesses Have Supercharged Cyberattacks

AI/ML vulnerabilities create high-value targets, exposing models, datasets, and infrastructure. When chained, these flaws—especially in Large Language Models (LLMs)—trigger cascading exploits and amplify risks across systems. Below, we outline key vulnerabilities, highlighting their impact on AI-driven systems.

High-Value Targets: Compromised AI systems expose proprietary datasets, model architectures, and compute resources. For instance, a Code Injection (CWE-94, Improper Control of Generation of Code) vulnerability in Lunary’s AI toolkit (CVE-2024-7474), disclosed in August 2024, allowed attackers to steal sensitive data like model weights, risking IP loss across organizations.

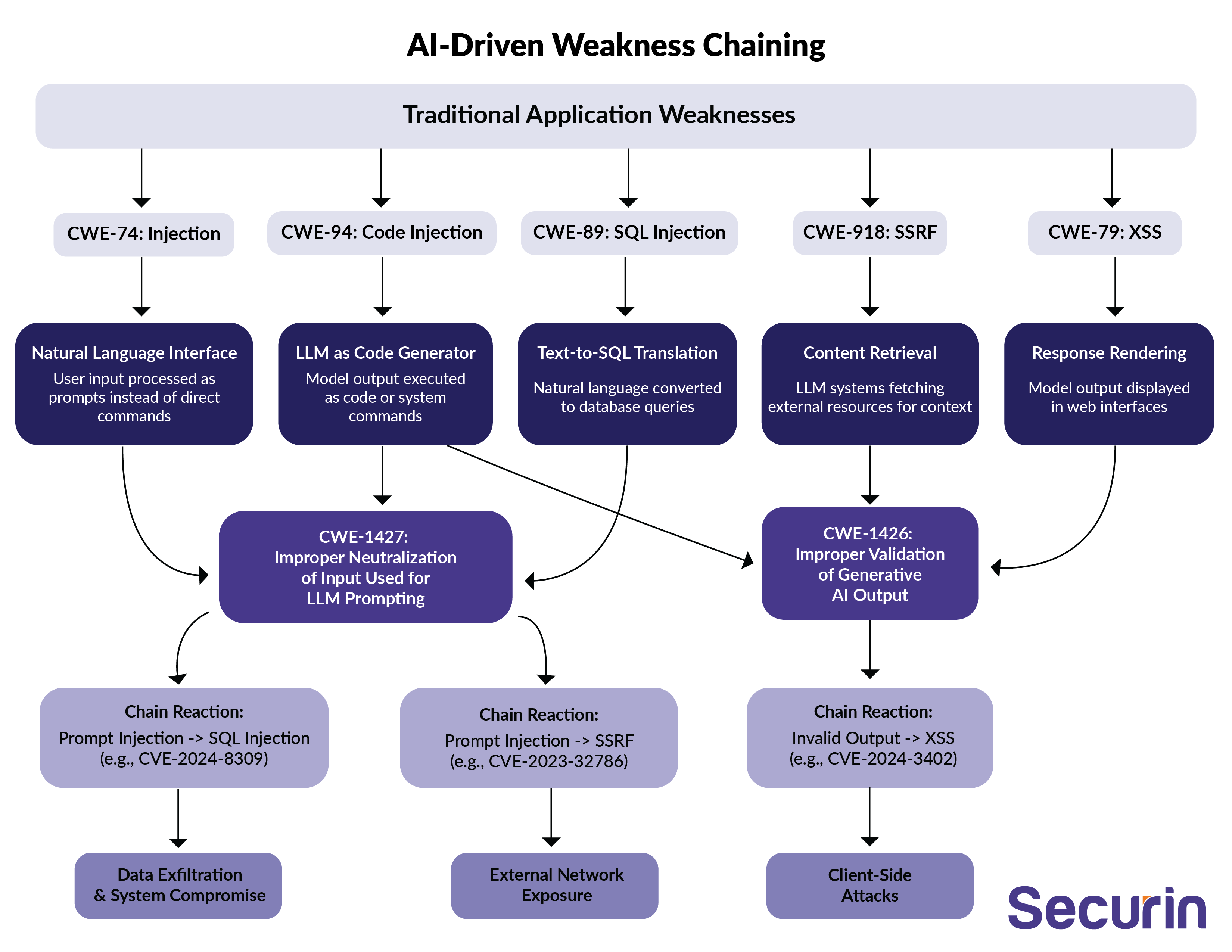

AI-Driven Weakness Chaining: LLMs enable semantic attacks, repurposing traditional flaws like Injection (CWE-74, Improper Neutralization of Special Elements in Output Used by a Downstream Component), Code Injection (CWE-94), and SQL Injection (CWE-89, Improper Neutralization of Special Elements used in an SQL Command). A CWE-89 flaw in SharePoint (CVE-2023-29357), exploited through 2024, enabled unauthorized database access, risking AI-driven text-to-SQL breaches. LLM outputs can propagate secondary exploits, escalating single flaws into multi-layer attacks. The image below illustrates how these overlapping flaws interact to expand the attack surface and escalate risk.
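As a small illustration of the text-to-SQL risk, the Python sketch below binds a model- or user-derived value as a query parameter instead of concatenating it into the SQL string. The `orders` table, its columns, and the `find_orders` helper are hypothetical; the sketch uses the standard-library `sqlite3` module only to stay self-contained.

```python
import sqlite3


def find_orders(conn: sqlite3.Connection, customer: str) -> list:
    # The SQL text is fixed. The LLM- or user-derived value is bound as a
    # parameter, so it is treated as data and never parsed as SQL (CWE-89).
    query = "SELECT id, item FROM orders WHERE customer = ?"
    return conn.execute(query, (customer,)).fetchall()


# Hypothetical in-memory table used only for this demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, customer TEXT, item TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "alice", "gpu"), (2, "bob", "ssd")],
)
```

Calling `find_orders(conn, "alice")` returns Alice's row, while an injection attempt such as `x' OR '1'='1` matches nothing because the whole string is compared as a literal customer name. Parameter binding covers only the case where the model supplies values. If an LLM generates the entire SQL statement, additional controls such as a read-only database role or a statement allow-list would be needed.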

Amplified Attacks: AI’s capabilities, like code generation and text-to-SQL, magnify vulnerabilities. A CWE-79 (Cross-Site Scripting, Improper Neutralization of Input During Web Page Generation) flaw in , exploited through 2024, enabled phishing and credential theft, amplified by AI interfaces processing web inputs.

Emerging Vulnerabilities in Generative AI Frameworks: A 2025 Wake-Up Call

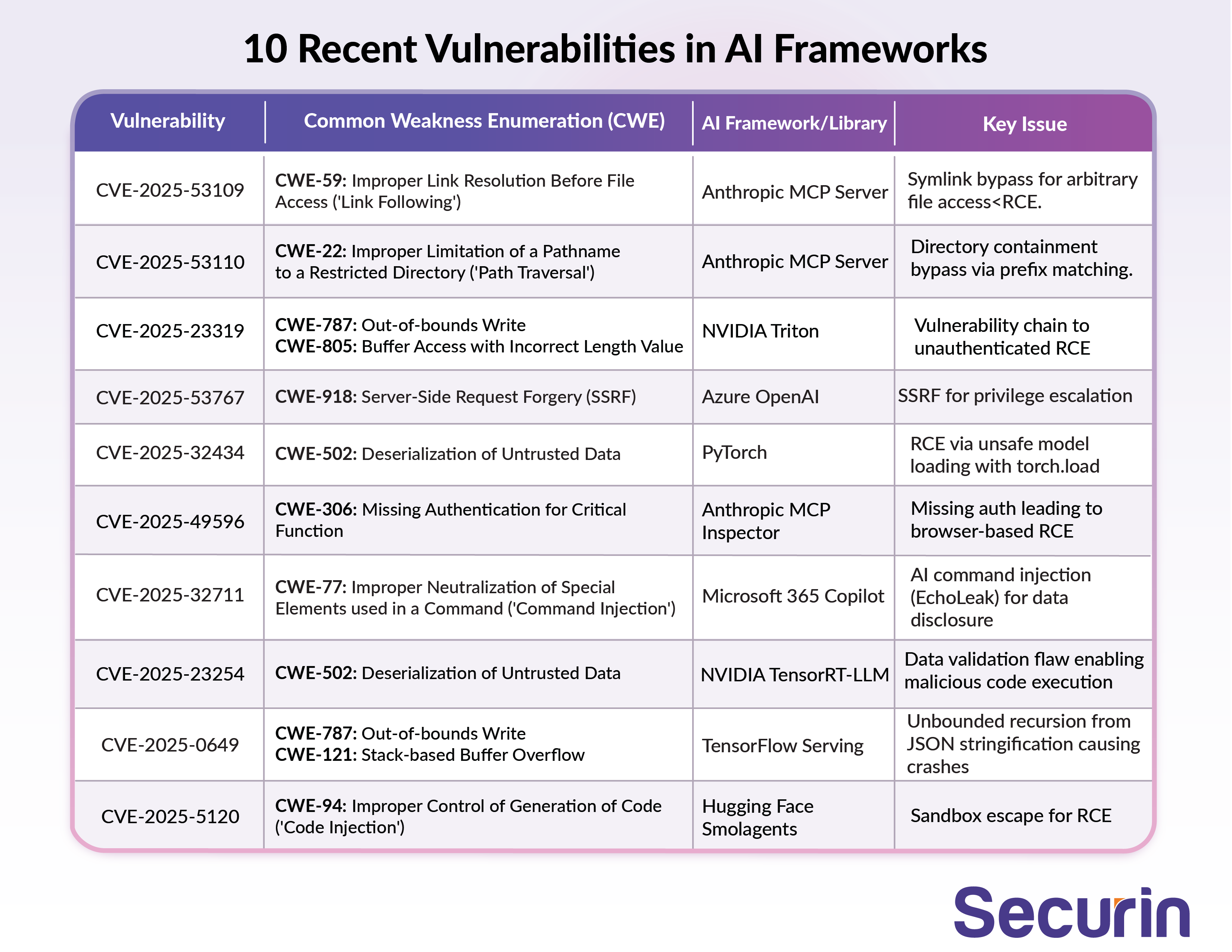

As generative AI frameworks like PyTorch, Hugging Face, and NVIDIA's tools power everything from chatbots to enterprise models, security flaws are proliferating. In 2025 alone, we've seen a spike in critical CVEs exposing AI systems to remote code execution (RCE), data theft, and privilege escalation. Below is a snapshot of 10 recent CVEs in AI frameworks, drawn from security disclosures, highlighting risks in popular libraries.

These vulnerabilities underscore the fragility of AI ecosystems, where untrusted inputs and deserialization often open doors to attacks. These weaknesses don’t exist in isolation—they connect back to long-standing software flaws that AI frameworks inherit and magnify.
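To show why deserializing untrusted input is dangerous and one way to narrow the exposure, here is a sketch based on the restricted-unpickler pattern described in the Python `pickle` documentation. It is not the fix used by any framework mentioned in this article, and the allow-list contents and the `restricted_loads` name are assumptions chosen for illustration.

```python
import builtins
import io
import pickle

# Assumed allow-list: the only globals a pickle stream may reference.
SAFE_BUILTINS = {"range", "complex", "set", "frozenset", "slice"}


class RestrictedUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the stream asks for a global (class or function).
        # Anything outside the allow-list is refused before it can be called.
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Deserialize data while refusing non-allow-listed globals."""
    return RestrictedUnpickler(io.BytesIO(data)).load()
```

A plain `pickle.loads` on the byte string `b"cos\nsystem\n(S'echo hello'\ntR."` would run a shell command, whereas `restricted_loads` raises `pickle.UnpicklingError` when the stream requests `os.system`. An allow-list reduces the risk but does not make pickle a safe format for untrusted data. Where possible, a data-only format such as JSON is the simpler choice.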

Securin’s 25-year analysis reveals how pre-existing software flaws like improper input validation (CWE-20) in AI/ML libraries such as PyTorch persist, supercharging cyberattacks and threatening secure-by-design AI systems. Root cause analysis with CWE uncovers these hidden risks, from legacy systems to modern frameworks.

What happens when these flaws fuel generative AI’s 2025 surge? Join us in Part 2 for a critical wake-up call about the risks involved when using AI without securing your systems first.