AI Red Teaming: Are Your AI Models Secure?

Pamela Weaver

Securin Team

July 25, 2025

Attack Your AI. Before Someone Else Does.

Is your AI secure in production? Or are you guessing?

Don’t trust. Test.

AI and GenAI models aren’t toys, they’re infrastructure. They’re writing code, interacting with customers, generating investment data… And yet most organizations stop testing the moment models go live. And that’s where the real risk begins.

And that’s at the lower-end of the possible impact. AI systems are increasingly deployed in settings like healthcare, energy, transport – where mistakes are literally a matter of life and death.

Pre-launch security testing is a great start. But it doesn’t reveal how AI models respond under pressure. Once your system is live — responding to unpredictable queries, handling sensitive business logic – new vulnerabilities emerge. That’s the inference layer – and that’s where your AI is most exposed.

Are you ready to see what your AI model is really doing? Welcome to the inference layer. It’s where things get real for AI security.

Lock Before You Load

You’ve got static evaluations. A few filters. Maybe a pre-launch Q&A script. And then you hit “Go!” and your AI model is out in the wild, facing prompts and queries. Some from curious users, others from people actively seeking to break it.

This operational phase – the “inference layer” – is distinctly different from the development phase. It’s when your AI system is:

• Live and operational

• Connected to real data and business systems

• Actively processing user requests and questions

• Making decisions, and generating responses in real-time

At this point, AI systems are vulnerable in ways that static testing doesn’t reveal:

- Live AI systems are vulnerable to manipulation: Deployed AI systems can be tricked into revealing sensitive data, harmful content or bad decisions – even systems that have passed all pre-deployment testing.

- Security testing can’t keep pace with real-world AI threats: Traditional security testing is manual, inconsistent, and can’t keep up with the thousands of ways people interact with AI systems daily.

- AI security incidents are damaging, and expensive to resolve: The consequences of an AI system ‘misbehaving’ in active use are immediate and can damage reputation, customer trust, and incur regulatory penalties.

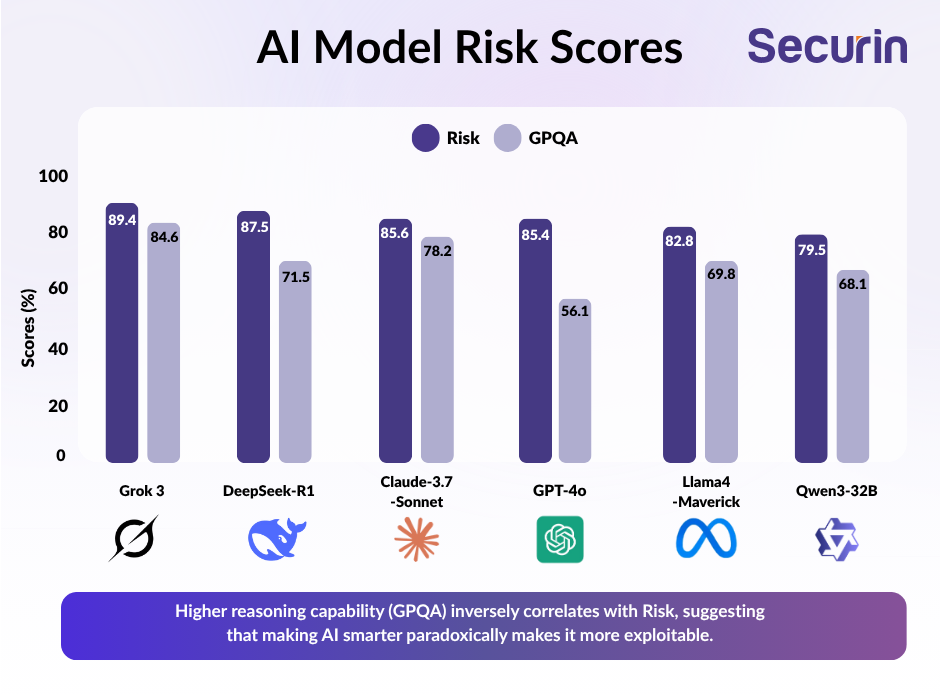

In fact, the smarter they are, the more vulnerable AI systems are to attack, as Securin research shows:

Bottom line: Pre-launch testing doesn’t catch these failures. It can’t. Why? Because what breaks an AI model isn’t always visible in development. It only surfaces when the model is prodded, tricked, coaxed over time. That’s what attackers do. And that’s why red teaming exists.

Securin’s Approach to AI Red Teaming: Prove Your AI is Safe

AI red teaming is the difference between hoping your model is safe, and knowing. It takes an attacker’s view of your model, and simulates adversarial tactics. It keeps probing until it succeeds – or proves your AI is genuinely resilient.

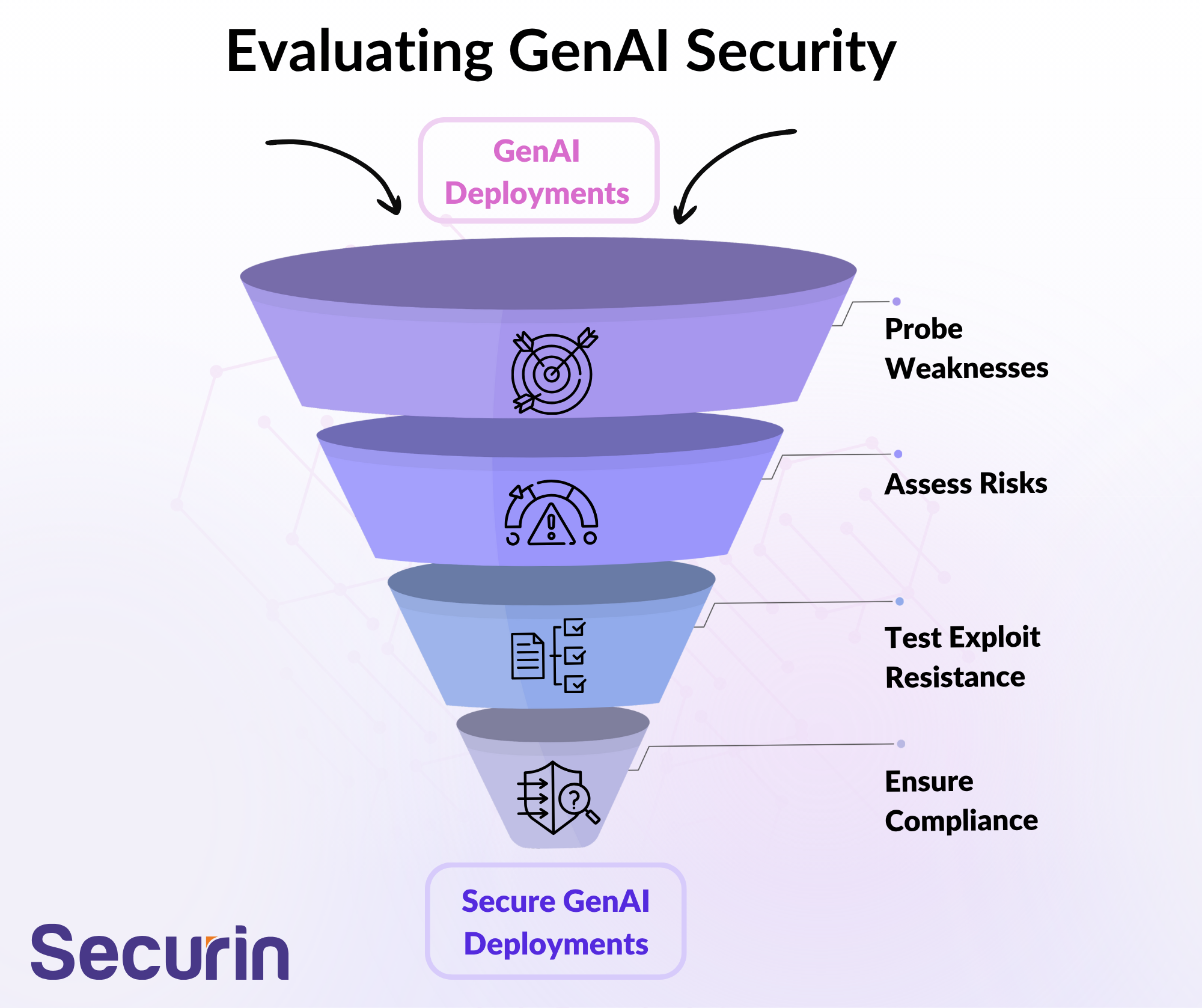

At the core of Securin’s approach to AI pen testing is a repeatable, transparent methodology designed with one purpose in mind: exposing AI vulnerabilities before attackers can. Built for the realities of operational AI systems, it has four key phases:

1.Reconnaissance and Configuration

Identify your target model, configure adversarial agents, define the behaviors you want to test (e.g. leaks, missteps, hallucinations), and set the scoring systems in place.

2.Attack Execution

Securin’s technology first launches static attacks – known, high-risk prompts such as injections, role-play exploits, and encoding tricks. This is followed with dynamic attacks – orchestrated conversations from adversarial LLMs that adapt and escalate based on how your model responds. Think less “Can we break it?” and more “How far can we push this before it breaks?”

3.Vulnerability Analysis

Every response is scored, with jailbreaks clearly flagged when a risk score exceeds 0.7. Patterns emerge, and you begin to see not just if a model fails, but how, where, and why. These insights go far beyond surface-level metrics to show systemic weaknesses and gaps in guardrails.

4.Documentation and Reporting

No more black boxes: Securin’s approach generates full transcripts, risk dashboards and visual summaries, along with detailed recommendations for remediation. This gives teams actionable insights and evidence that’s CISO-friendly or to include in audits.

These four phases provide a comprehensive view of AI model behavior under real-world pressure, from how attacks are crafted to how responses are captured, interpreted and acted on. To take those insights to the next level, you need a scoring system that’s as rigorous as the testing. Securin’s structured evaluation framework helps with this. Let’s take a look.

Metrics That Matter: Scoring You Can Trust

Pen testing is only as good as your ability to learn from it, and build resilience. Securin’s approach doesn’t just generate attack logs, it applies a structured, auditable scoring system that makes every result actionable:

• Jailbreak Success Rates: How often do attack attempts actually succeed in breaking a model’s intended behavior? With scoring, you don’t just know that something failed, but how severely.

• Vulnerability Patterns: Are certain types of prompt consistently getting through? Is there a recurring weakness in how your model handles hypothetical scenarios or technical workarounds such as encoded inputs? Securin identifies these patterns, so you can fix root causes, not just patch individual failures.

• Defense Resilience: Any model can refuse a request once, but how does it handle persistence? Rephrasing? Subtle escalation over a multi-turn dialog? This metric shows you how well your AI holds up in real-world scenarios.

• Static vs Dynamic Resistance: Some weaknesses only emerge under sustained, adaptive pressure. Others fold at the first well-crafted prompt. Securin’s approach doesn’t treat all attacks the same, this approach helps teams distinguish between superficial compliance and structural robustness.

Taken together, these metrics feed into a broader understanding of AI model maturity and deployment readiness. They’re traceable, repeatable, and - importantly - can be compared over time or between models. These risk indicators help teams to decide whether or not a model is ready for production, what kind of uses cases it’s safe to handle, whether additional training is required, and how any changes to the model affect resilience over time.

Why is this important? Because knowing where your AI model is vulnerable is only half the battle; fixing problems with clarity and transparency is crucial.

Why AI Red Teaming Matters

In a world where AI decisions are being made in production environments, red teaming is more than just a technical exercise, it’s a business safeguard. Failure to test AI models properly has significant potential consequences: regulatory, reputational, financial, operational. Just some of the reasons why AI pen testing is crucial:

• Prevent real-world failures before they hit production.

• Expose vulnerabilities that pre-launch QA never sees.

• Lower risk, avoid penalties, build and maintain trust.

• Give stakeholders the data they need.

Ultimately, if your AI models are in production, they’re in play. And if they’re in play, they’re under pressure and at risk. Securin’s approach gives you the insight – and data – you need to meet that pressure head-on.

What’s next? We’ll take a look at the different types of AI attack, and what you can do to mitigate them. In the meantime, to learn more about how Securin approaches AI security, check out our AI “Nutrition Label” initiative.